Building a basic LB

What does building a basic Load balancer look like

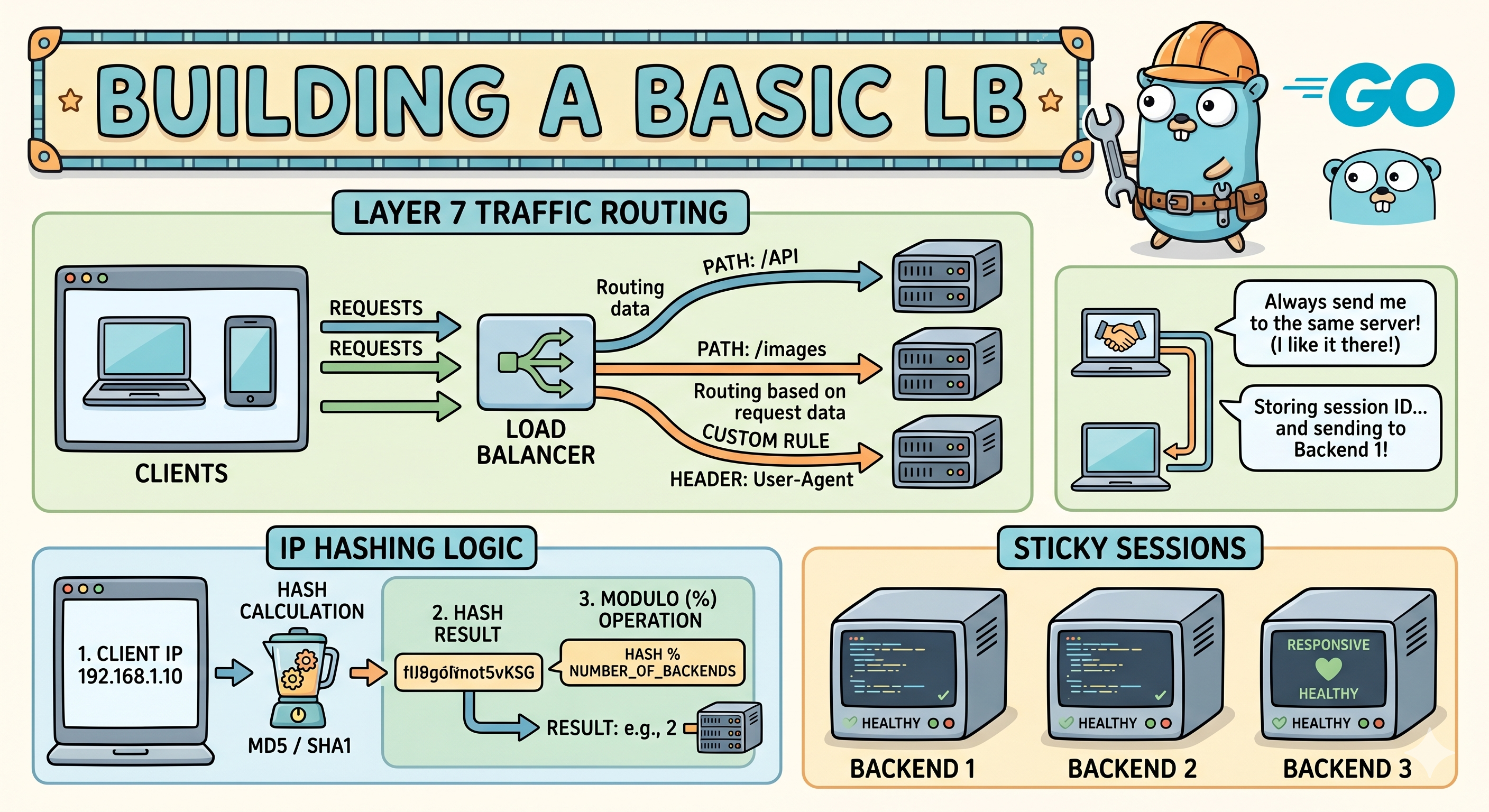

G’day, today I wanted to brush up on some of the basics of load balancers and how they work on a little deeper level. I’m going to cover a brief intro to what the components of a Layer 7 load balancer/application gateway are and what a simple implementation with IP hashing and session stickiness looks like.

Concepts

What is Layer 7 routing?

Unlike a traditional Layer 4 load balancer which looks at IPs and ports a Layer 7 LB operates a higher level closer to the application (The application layer). L7 lbs read the HTTP request including URLs, headers, and cookies to figure out where it needs to go. Since they read the HTTP request they can do smarter routing like sending requests for /api to one set of backend servers and /webstore to another set.

Digging a little deeper a Layer 7 load balancers acts as an intermediary and is often referred to as a reverse proxy. So how does it handle a request? Let’s break it down.

Connection Termination: Unlike Layer 4 load balancing, which simply forward raw network packets, the layer 7 load balancer fully terminates the client’s TCP connection and handles any required TLS decryption.

Deep Inspection: When the connection is established, it reads and parses the HTTP message which gives the load balancer full access to inspect URL paths, HTTP headers, cookies and query parameters.

Routing Decision: The extracted application data is evaluated against a set of configured rules. Like we mentioned before it might check for a certain URL path or for a specific session cookie to determine when to route the request.

Forwarding: Once a routing decision is made the load balancer opens a new, separate TCP connection to the selected backend server (an existing one might be reused via HTTP keepalives) and writes the request to it.

Since there’s a bit more going here than at Layer 4, Layer 7 routing requires more CPU and memory overhead to buffer and parse the application payload. But… the benefits of this are pretty sweet and allows to manipulate HTTP headers, inject cookies for sticky sessions and route traffic based on the content requested.

What’s IP Hashing and why use it to load balance?

IP Hashing or Source IP Hashing work by using the client’s IP address as the key to determine which server receives the request. The load balancer runs the client’s IP through a mathematical hash function and the resulting value dictates the backend server. Here’s a really simple example of this for 3 backend servers,

- Input:

192.168.1.45 - Hash Function: Calculates a deterministic hash value of 1,023,347.

- Calculation: We calculate the remainder based on our number of servers - 1,023,347 (mod 3) = 2

- Routing result: The request is routed the server 2.

Because the hash function is deterministic, the same client IP will always calculate to the same hash result. This deterministic nature guarantees that a specific user is consistently routed to the same backend server for the duration of their session.

The IP hashing approach is great because we get built-in session persistence, easily achieve sticky sessions without needing to parse HTTP cookies, it’s quite simple and straightfoward to implement in code and requires little CPU overhead.

Unfortunately this naive approach suffers from a few negatives at scale. NAT (Network Address Translation) causes users interacting with a site from behind a firewall or similar NATed network to appear to have the exact same public IP address. In this case the load balancer would end up hashing them all to the same backend server rather than properly spreading the load. Another important consideration is the fragility to changes in server backend pools, basic IP hashing relies on the total number of server to calculate the hash. So if a server crashes or you scale up by adding another to the pool… the hash calculation changes for everyone (Consistent Hashing is the solution to this issue).

Making sure we aren’t sending traffic to a dead server

Active health checks are proactive probes (normally just a HTTP GET) that the load balancer periodically sends to its backend pool of servers to make sure they’re alive and ready to receive traffic. These are super important because they make sure load balancers don’t route traffic to a crashed, or unhealthy server.

There’s 3 key concepts here.

- Threshold-Based Transitions: It’s not a great idea to mark a server down after just one single failed check or mark it as up again after just one successful check. It’s much better to require consecutive failures or successes so we can prevent the status of the server from flapping during a few network hiccups.

- Concurrency: When a health check is performed we don’t want to prevent actual work from happening. That’s why concurrency is a great technique to perform them in the background without blocking actual traffic.

- Strict Timeouts: Health checks shouldn’t wait forever, if a backend server is taking too long to respond… that timeout is a health failure.

Putting it all together

Now let’s try and whip something together in Go to demo these basic concepts. In our simple implementation we’ll need a few things. From now on I’ll also use proxy in replacement of load balancer since works better with Go’s definitions.

- Connection and http request management utilities

- State to track which servers are up or down and their URLs.

- Since our will handle many requests at once we’ll need Mutexes for our concurrency requirement.

- Hashing Algorithm for sticky sessions

- Background routines for health checks to make sure they don’t stop the proxy from working.

First we’ll define a struct to hold the URL, proxy instance, and the health status. Bundling these together ensures that when we pick a server, all its tools and its current “health” are in one object.

1

2

3

4

5

6

7

// Backend holds the state of a single server

type Backend struct {

URL *url.URL

Alive bool

mux sync.RWMutex

ReverseProxy *httputil.ReverseProxy

}

SetAlive and IsAlive safely update or read the Alive boolean, we use Lock() and RLock() because the health checker runs in a different thread than the request handler.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

// SetAlive updates the health status

func (b *Backend) SetAlive(alive bool) {

b.mux.Lock()

b.Alive = alive

b.mux.Unlock()

}

// IsAlive returns the current health status

func (b *Backend) IsAlive() bool {

b.mux.RLock()

alive := b.Alive

b.mux.RUnlock()

return alive

}

getHash(ip) is what converts a client IP into our server/index number. fnv does the work here and is computationally cheap to distribute IPs evenly across our servers.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

var serverPool []*Backend

// getHash generates a consistent index based on the IP string

func getHash(ip string) int {

// r.RemoteAddr is usually "192.168.1.1:54321", we need to strip the :54321

host, _, err := net.SplitHostPort(ip)

if err != nil {

host = ip // Fallback if no port is present

}

h := fnv.New32a()

h.Write([]byte(host))

return int(h.Sum32()) % len(serverPool)

}

Very simple health check with a 2 second timeout that runs in a loop to just see if the server is there without downloading the whole page.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

17

18

19

20

21

22

23

// HealthCheck pings backends periodically

func healthCheck() {

// Create a client that will give up after 2 seconds

t := &http.Transport{

ResponseHeaderTimeout: 2 * time.Second,

}

client := &http.Client{

Transport: t,

Timeout: 2 * time.Second,

}

for {

for _, b := range serverPool {

res, err := client.Head(b.URL.String())

if err != nil || res.StatusCode != http.StatusOK {

b.SetAlive(false)

} else {

b.SetAlive(true)

}

}

time.Sleep(10 * time.Second)

}

}

proxy.Director Is the hook that intercepts the request before it leaves the proxy. In a more complex implemntation we’d do request inspection, inject headers or even scrub sensitive data here. httputil is also used here instead of writing a proxy from scratch, whilst an it would be an interesting endeavour it’s always better to use a battle-tested implementaion that follows any set out RFC standards than DIYing it. One of the gotcha here is since the LB terminates the connection, the backend server thinks the “client” is the LB’s IP. Setting the X-Forwarded-For header is the industry standard for passing the original user’s IP down the chain.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

target, _ := url.Parse(s)

proxy := httputil.NewSingleHostReverseProxy(target)

// Message Inspection: Use the 'Director' to modify or inspect the request

originalDirector := proxy.Director

proxy.Director = func(req *http.Request) {

originalDirector(req)

// Help the backend know the real client IP

clientIP, _, _ := net.SplitHostPort(req.RemoteAddr)

req.Header.Set("X-Forwarded-For", clientIP)

log.Printf("[INSPECT] Method: %s | Path: %s | User-Agent: %s",

req.Method, req.URL.Path, req.UserAgent())

}

The proxy handler is where our main logic that recives a request and decides where to send it lives. There’s also some simple fallthrough logic if the hashed server is down to find the next best available.

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

16

// The Proxy Handler

http.HandleFunc("/", func(w http.ResponseWriter, r *http.Request) {

clientIP := r.RemoteAddr

index := getHash(clientIP)

// IP Hashing logic: Try the hashed server, or find the next alive one

for i := 0; i < len(serverPool); i++ {

targetIdx := (index + i) % len(serverPool)

if serverPool[targetIdx].IsAlive() {

serverPool[targetIdx].ReverseProxy.ServeHTTP(w, r)

return

}

}

http.Error(w, "Service Unavailable", http.StatusServiceUnavailable)

})

Finally it’s important to understand that http.ListenAndServe automatically spawns a new goroutine for every request. It’s because of this that our sync.RWMutex in the backend struct is vital as multiple threads will be hitting IsAlive() simultaneously.

1

log.Fatal(http.ListenAndServe(":8000", nil))

Single Go file implementation

Here's the whole file put together

package main

import (

"fmt"

"hash/fnv"

"log"

"net/http"

"net/http/httputil"

"net/url"

"sync"

"time"

)

// Backend holds the state of a single server

type Backend struct {

URL *url.URL

Alive bool

mux sync.RWMutex

ReverseProxy *httputil.ReverseProxy

}

// SetAlive updates the health status

func (b *Backend) SetAlive(alive bool) {

b.mux.Lock()

b.Alive = alive

b.mux.Unlock()

}

// IsAlive returns the current health status

func (b *Backend) IsAlive() bool {

b.mux.RLock()

alive := b.Alive

b.mux.RUnlock()

return alive

}

var serverPool []*Backend

// getHash generates a consistent index based on the IP string

func getHash(ip string) int {

// r.RemoteAddr is usually "192.168.1.1:54321", we need to strip the :54321

host, _, err := net.SplitHostPort(ip)

if err != nil {

host = ip // Fallback if no port is present

}

h := fnv.New32a()

h.Write([]byte(host))

return int(h.Sum32()) % len(serverPool)

}

// HealthCheck pings backends periodically

func healthCheck() {

// Create a client that will give up after 2 seconds

t := &http.Transport{

ResponseHeaderTimeout: 2 * time.Second,

}

client := &http.Client{

Transport: t,

Timeout: 2 * time.Second,

}

for {

for _, b := range serverPool {

res, err := client.Head(b.URL.String())

if err != nil || res.StatusCode != http.StatusOK {

b.SetAlive(false)

} else {

b.SetAlive(true)

}

}

time.Sleep(10 * time.Second)

}

}

func main() {

// 1. Define our imaginary servers

serverUrls := []string{

"http://127.0.0.1:8081",

"http://127.0.0.1:8082",

"http://127.0.0.1:8083",

}

for _, s := range serverUrls {

target, _ := url.Parse(s)

proxy := httputil.NewSingleHostReverseProxy(target)

// Message Inspection: Use the 'Director' to modify or inspect the request

originalDirector := proxy.Director

proxy.Director = func(req *http.Request) {

originalDirector(req)

// Help the backend know the real client IP

clientIP, _, _ := net.SplitHostPort(req.RemoteAddr)

req.Header.Set("X-Forwarded-For", clientIP)

log.Printf("[INSPECT] Method: %s | Path: %s | User-Agent: %s",

req.Method, req.URL.Path, req.UserAgent())

}

serverPool = append(serverPool, &Backend{

URL: target,

Alive: true,

ReverseProxy: proxy,

})

}

// 2. Start health checks in the background

go healthCheck()

// 3. The Proxy Handler

http.HandleFunc("/", func(w http.ResponseWriter, r *http.Request) {

clientIP := r.RemoteAddr

index := getHash(clientIP)

// IP Hashing logic: Try the hashed server, or find the next alive one

for i := 0; i < len(serverPool); i++ {

targetIdx := (index + i) % len(serverPool)

if serverPool[targetIdx].IsAlive() {

serverPool[targetIdx].ReverseProxy.ServeHTTP(w, r)

return

}

}

http.Error(w, "Service Unavailable", http.StatusServiceUnavailable)

})

log.Println("Reverse Proxy started at :8000")

log.Fatal(http.ListenAndServe(":8000", nil))

}